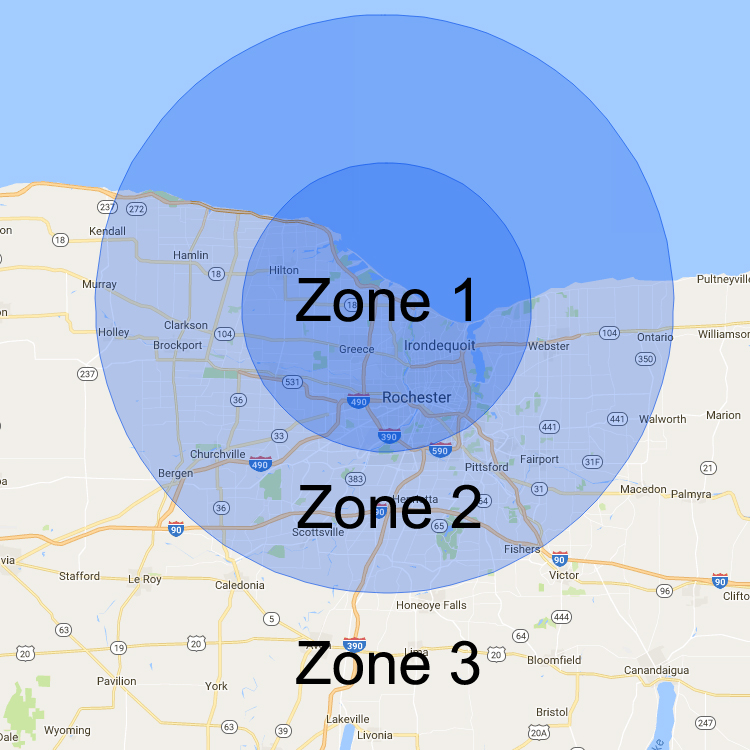

Rate limiting is used to control the amount of incoming and outgoing traffic to or from a network. For example, let's say you are using a particular service's API that is configured to allow 100 requests/minute. If the number of requests you make exceeds that limit, then an error will be triggered. The reasoning behind implementing rate limits is to allow for a better flow of data and to increase security by mitigating attacks such as DDoS. Rate limiting is also useful if a particular user on the network makes a mistake in their request and asks the server to retrieve a large amount of information that may overload the network for everyone. With rate limiting in place, however, these types of errors or attacks are much more manageable. In this post, we'll be diving deeper into various types of rate limiting methods, implementation examples, and how rate limiting works in conjunction with KeyCDN.

There are various methods and parameters that can be defined when setting rate limits. The rate limit method that should be used will depend on what you want to achieve as well as how restrictive you want to be. The section below outlines three different types of rate limiting methods that you can implement.

User rate limiting: The most popular type of rate limiting is user rate limiting. This associates the number of requests a user is making with their API key or IP address (depending on which method you use). Therefore, if the user exceeds the rate limit, any further requests will be denied until they reach out to the developer to increase the limit or wait until the rate limit timeframe resets.

Geographic rate limiting: To further increase security in certain geographic regions, developers can set rate limits for particular regions and particular time periods. For instance, if a developer knows that from midnight to 8:00 am users in a particular region won't be as active, then they can define lower rate limits for that time period. This can be used as a preventative measure to help further reduce the risk of attacks or suspicious activity.

Server rate limiting: If a developer has defined certain servers to handle certain aspects of their application, then they can define rate limits on a server-level basis. This gives developers the freedom to decrease traffic limits on server A while increasing them on server B (a more commonly used server).

There are various ways to go about actually implementing rate limits. This can be done at the server level, or it can be implemented via a programming language or even a caching mechanism. The two implementation examples below show how to integrate rate limiting either via Nginx or Apache.
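To make the programming-language approach concrete, the 100 requests/minute example can be sketched in Python as a fixed-window counter keyed by API key or IP address. This is only a minimal illustration: the class name `FixedWindowRateLimiter`, its `allow` method, and the choice of a fixed-window strategy are assumptions made for this sketch, not something prescribed by any particular service, and the counters live in the memory of a single process.

```python
import time
from collections import defaultdict


class FixedWindowRateLimiter:
    """Allow at most `limit` requests per `window_seconds` for each key.

    Hypothetical helper for illustration; the key can be an API key or an IP.
    """

    def __init__(self, limit=100, window_seconds=60, clock=time.monotonic):
        self.limit = limit
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the behavior can be tested
        # key -> [window_start, request_count]
        self._windows = defaultdict(lambda: [None, 0])

    def allow(self, key):
        """Return True if the request may proceed, False if the limit is hit."""
        now = self.clock()
        window = self._windows[key]
        # Start a fresh window on first use or once the current one has expired.
        if window[0] is None or now - window[0] >= self.window_seconds:
            window[0] = now
            window[1] = 0
        if window[1] >= self.limit:
            return False
        window[1] += 1
        return True


if __name__ == "__main__":
    limiter = FixedWindowRateLimiter(limit=100, window_seconds=60)
    results = [limiter.allow("example-api-key") for _ in range(101)]
    print(results.count(True), "allowed,", results.count(False), "denied")
```

A request handler would call `limiter.allow(...)` with the caller's API key or IP address and return an error when it yields `False`; HTTP status 429 (Too Many Requests) is a common choice for that error. A different limit per server, region, or time of day could be modeled by constructing limiters with different `limit` values.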